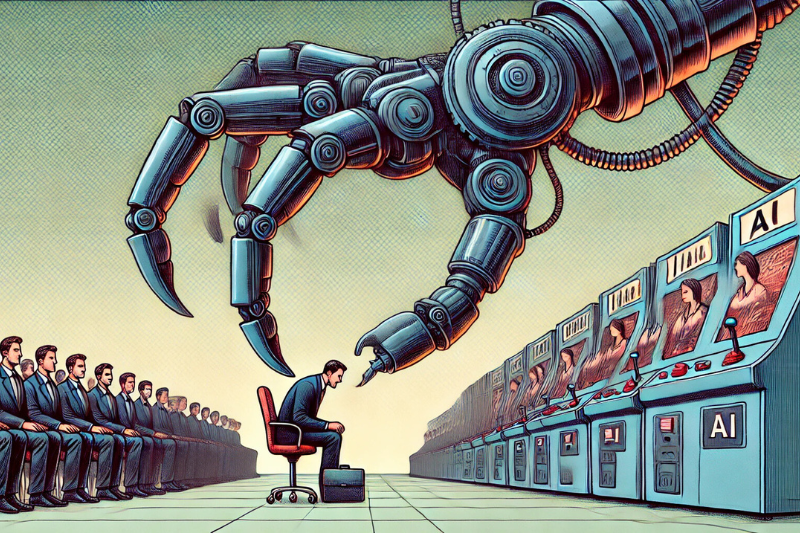

When AI plays favourites: How algorithmic bias shapes the hiring process

HR News Canada is an independent source of workplace news for human resources professionals, managers, and business leaders. Published by North Wall Media.